The symbol a represents the Y intercept, that is, the value that Y takes when X is zero. The symbol b describes the slope of the line: the number of units that Y changes when X changes 1 unit. If the slope is 2, then when X increases 1 unit, Y increases 2 units. If the slope is -.25, then as X increases 1 unit, Y decreases .25 units.

We usually have to estimate the parameters. The equation for estimates rather than parameters (equation 2.2) has the same form as the population model, a line plus an error term, with sample estimates in place of the parameters. If we take out the error part of equation 2.2, we have a straight line that we can use to predict values of Y from values of X, which is one of the main uses of regression. It looks like this:

$$\hat{Y} = a + bX \qquad (2.3)$$

Equation 2.3 says that the predicted value of Y, written $\hat{Y}$ here, is equal to a linear function of X.
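To make the roles of the intercept and slope concrete, here is a minimal Python sketch that estimates a and b from a small sample and then predicts Y from X. It assumes the usual least-squares formulas for a single independent variable (slope = sum of cross-products of deviations divided by sum of squared X deviations; intercept = mean of Y minus slope times mean of X). The function names `fit_line` and `predict` and the data values are made up for illustration.

```python
def fit_line(xs, ys):
    """Return (a, b): least-squares intercept and slope for paired scores."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products of deviations from the means
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sum of squared deviations of X from its mean
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx              # slope: change in Y per 1-unit change in X
    a = mean_y - b * mean_x    # intercept: predicted Y when X is zero
    return a, b


def predict(a, b, x):
    """Predicted Y for a given X: the line with the error part removed."""
    return a + b * x


# Hypothetical sample, for illustration only
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]

a, b = fit_line(xs, ys)
print(f"intercept a = {a:.2f}, slope b = {b:.2f}")

# Raising X by 1 unit changes the predicted Y by exactly b units
step = predict(a, b, 4) - predict(a, b, 3)
print(f"change in predicted Y for a 1-unit increase in X = {step:.2f}")
```

Note that `predict(a, b, 0)` returns the intercept itself, which matches the definition of a as the value Y takes when X is zero.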

It is customary to call the independent variable X and the dependent variable Y. The X variable is often called the predictor and Y is often called the criterion (the plural of 'criterion' is 'criteria'). It is customary to talk about the regression of Y on X, so that if we were predicting GPA from SAT we would talk about the regression of GPA on SAT.

Scores on a dependent variable can be thought of as the sum of two parts: (1) a linear function of an independent variable, and (2) random error. In symbols, $Y_i = a + bX_i + e_i$, where $Y_i$ is a score on the dependent variable for the ith person, $a + bX_i$ describes a line or linear function relating X to Y, and $e_i$ is an error. Note that there is a separate score for each X, Y, and error (these are variables), but only one value of a and b, which are population parameters. The portion of the equation denoted by $a + bX_i$ defines a line. The symbol X represents the independent variable.
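The two-part description of a score can be illustrated with a short simulation. This is only a sketch: the intercept, slope, error standard deviation, and SAT values below are hypothetical numbers chosen for illustration, and the errors are assumed to be normally distributed with mean zero.

```python
import random

random.seed(0)  # fixed seed so the illustration is repeatable

# Hypothetical population parameters: one value each for the whole population
a = 1.0      # intercept
b = 0.002    # slope: GPA points per SAT point

# Hypothetical SAT scores (X), one per person
sat = [900, 1000, 1100, 1200, 1300, 1400]

# Part 1: the linear function of X for each person
linear_part = [a + b * x for x in sat]

# Part 2: a separate random error for each person (assumed normal, mean 0)
errors = [random.gauss(0, 0.3) for _ in sat]

# Each observed score is the sum of the two parts
gpa = [line + e for line, e in zip(linear_part, errors)]

for x, line, e, y in zip(sat, linear_part, errors, gpa):
    print(f"SAT={x}  linear part={line:.2f}  error={e:+.2f}  GPA={y:.2f}")
```

Every person has their own X, Y, and error, while a and b stay fixed across people, which is the distinction between variables and parameters drawn above.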

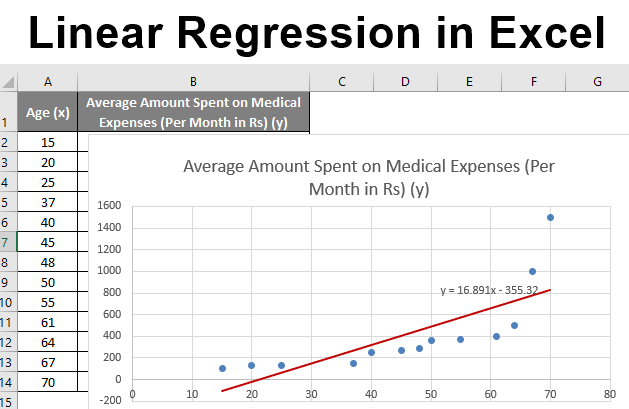

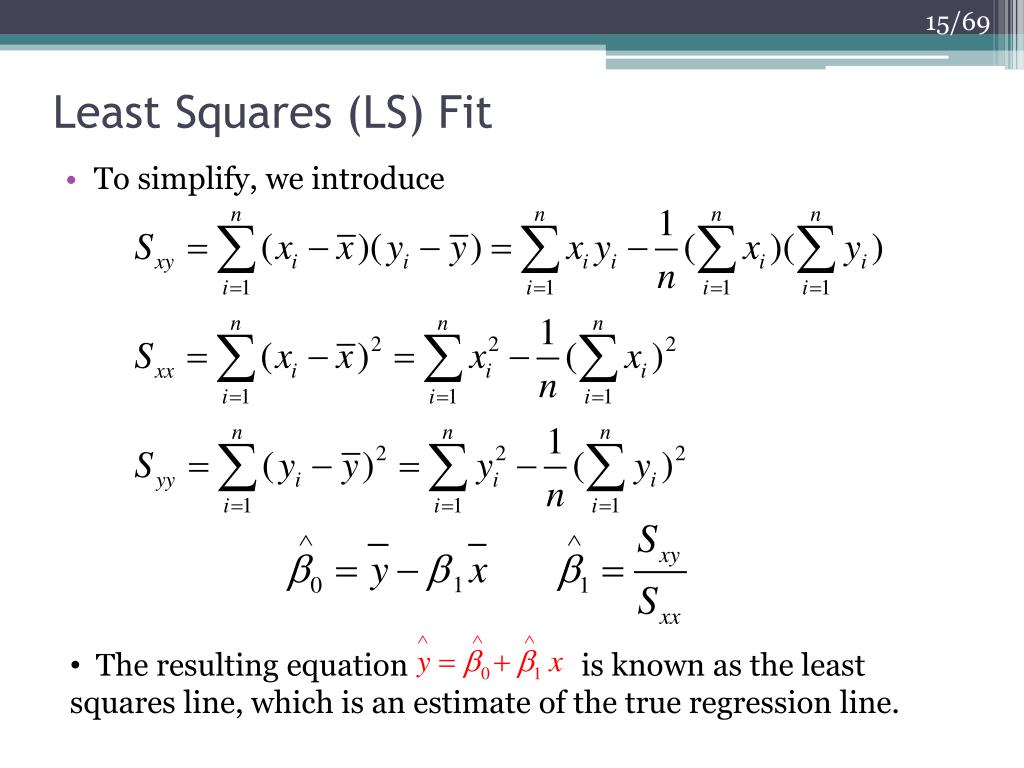

The linear model assumes that the relationship between two variables can be summarized by a straight line.

How do changes in the slope and intercept affect (move) the regression line? What does it mean to choose a regression line to satisfy the loss function of least squares? How do we find the slope and intercept for the regression line with a single independent variable? (Either formula for the slope is acceptable.) What does it mean to test the significance of the regression sum of squares? Of R-square? Why does testing for the regression sum of squares turn out to have the same result as testing for R-square?

According to the regression (linear) model, what are the two parts of variance of the dependent variable? (Write an equation and state in your own words what this says.)